
The goal is to a) keep your bombs intact around your flag and b) bait the enemy into sending a high-value piece through one of the doorways.

Challenge anything coming across with a weak piece you don't mind losing so you know what's coming. The basic idea is to play a waiting game. Immediately adjacent to the other doorway, place your Spy and a 4.ģ) Put a couple of key pieces inside the fortress as well, including your 2 and a couple of 8s. There should be two "doorways" on either side.Ģ) Immediately adjacent to one doorway, place your 1 and a 4. Well, here was my unbeatable strategy for our variation, but you can see how this might help you under the official rules:ġ) Place your flag in the middle of the board mostly surrounded by bombs. I also seem to remember that in our version, a Spy could kill a 1, 2, or 3, but lost to a 4 and below.but I think the official rules claim that he can only take out a 1. (In other word, the tie goes to the aggressor.) In the official rules, however, both pieces are lost. In our game, when there is a tie, the piece which MOVED wins.

Perolat et al., Science 378, 990, 2022.I had sent demon a PM, but I can reprint it here.Īfter doing some research, I found out that the rules that my family has always played under are actually a variation of the official rules. Over the thousands of games played, DeepNash won 97% of the time against other AI bots and 84% of the time against human experts. In some games, DeepNash purposefully chose not to move certain pieces, which left its opponent unsure of the rank of those pieces. In Stratego, players can often gain an advantage by knowing more private information than their opponents, even if they have fewer pieces on the board. When playing Stratego, DeepNash made several humanlike tactical decisions. In the third and final step, the previous transformation of the game is updated with the solution found in step two.

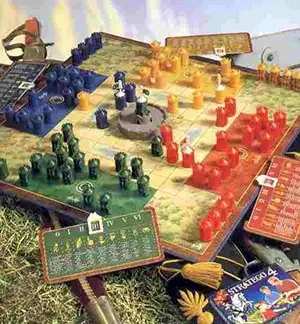

Step two takes the simplified, transformed game and converges on a possible winning solution. Akin to an error-minimization technique, regularization discourages a newly learned set of actions from deviating too far from the policy assigned at the beginning of the iteration. In the first step, the game is transformed into a simplified decision-making situation in which two agents each take some action according to a regularization policy added to the game. The researchers approached the challenge by building DeepNash as a deep-learning neural network, which improves the algorithm’s skill by having it play against itself, combined with a type of algorithm known as Regularized Nash Dynamics.Īt its simplest, the Regularized Nash Dynamics algorithm is an iterative process consisting of three steps. Many games, including Stratego, can have multiple Nash equilibria, and oftentimes researchers will develop an opponent model that tracks all the possible game states that could form from various moves and the likelihood of the player making each of those moves on a particular turn.īut calculating all those possibilities in an imperfect-information game quickly becomes too computationally expensive. In that approach, the algorithm finds a winning strategy by applying a set of tactical moves that can’t be exploited by the opponent. Now a DeepMind team led by Julien Perolat, Bart De Vylder, and Karl Tuyls has developed an algorithm called DeepNash that plays Stratego at the level of a human expert.ĭeepNash plays at a highly competitive level by finding a Nash equilibrium. The research laboratory DeepMind Technologies became famous in 2016 when its AlphaGo algorithm beat Go world champion Lee Sedol in a five-game match. (Only during an interaction between pieces do their ranks become known.) For an AI algorithm to win, it must make a series of long-term strategic moves and analyze a staggering 10 60 times as many starting arrangements as a two-player game of Texas Hold ’em. 
Two players each control 40 pieces: A piece can capture one of lower rank, but the specific ranks of the opponent’s pieces are unknown. As in capture the flag, each player guards their flag and tries to capture their opponent’s.

That number, however, is but a fraction of the 10 535 possible states for the board game Stratego. Despite the added complexity of the game compared with perfect-information games like chess, in 2015 artificial intelligence (AI) researchers designed a game-winning strategy for Texas Hold ’em, a variation of poker with 10 164 possible game states. Because each player has a set of starting cards that others can’t see, a player can bluff. Credit: zizou man, Wikimedia Commons, CC BY 2.0Ī game like poker is one with imperfect information. An opening arrangement of the imperfect-information game Stratego.
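To make the difference between the family variation and the official rules in the post concrete, here is a minimal Python sketch of how a single challenge might be resolved under each. It uses the post's numbering, where 1 is the strongest rank and larger numbers are weaker. The function name `resolve`, the `house_rules` flag, and the `SPY` marker are hypothetical names chosen for illustration. The sketch also assumes that the Spy only gets its special kill when it is the piece that moves, which the post does not state for the family variation, and it leaves bombs and the flag out entirely.

```python
SPY = "S"  # hypothetical marker for the Spy; numbered ranks are ints, 1 = strongest


def resolve(attacker, defender, house_rules=False):
    """Return "attacker", "defender", or None when both pieces are removed.

    attacker is the piece that moved. Bombs and the flag are not modeled.
    """
    # Ranks the Spy can kill: only the 1 officially, 1-3 in the family variation.
    spy_targets = {1, 2, 3} if house_rules else {1}

    # Equal ranks: the mover wins under the family rule, otherwise both are lost.
    if attacker == defender:
        return "attacker" if house_rules else None

    # Assumption: the Spy's special kill applies only when the Spy attacks.
    if attacker == SPY:
        return "attacker" if defender in spy_targets else "defender"
    if defender == SPY:
        return "attacker"

    # Ordinary challenge: the lower number is the stronger piece.
    return "attacker" if attacker < defender else "defender"
```

Under these assumptions, `resolve(SPY, 3, house_rules=True)` returns `"attacker"`, while the same challenge under the official rules returns `"defender"`, which is why the Spy is worth more as a doorway guard in the family variation.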
